Preventing Misuse of AI Tools by Learners: Policy and Design

Feb 28, 2026

Every week, another student uses an AI tool to write their entire essay. Not because they’re lazy, but because they don’t know how to use the tool responsibly. This isn’t a hypothetical problem; it’s happening in classrooms across the U.S., from community colleges to elite universities. The issue isn’t AI itself. It’s the lack of clear policies and thoughtful design to guide how learners interact with it.

Why Students Misuse AI Tools

Students aren’t trying to cheat. Most don’t even think of it that way. They see AI as a helper, like a calculator or spellcheck. When they’re overwhelmed, tired, or unsure how to start, they ask AI to do the work. And AI, designed to be helpful, often complies.

Take a 19-year-old in a first-year writing class. They’ve been told to "use technology" to improve their work. No one told them that asking AI to write a 1,500-word analysis of *The Great Gatsby* crosses a line. They didn’t get training. They didn’t get boundaries. They just got access.

Research from Stanford’s 2025 Education AI Survey found that 68% of college students used AI to draft assignments without understanding the ethical implications. The biggest reason? "No one explained the rules."

Policy Isn’t Just About Rules: It’s About Clarity

Many schools respond to AI misuse with bans. "No AI allowed." But bans don’t work. They push the behavior underground. Students use tools in secret, submit work they don’t understand, and miss out on learning how to use AI as a thinking partner.

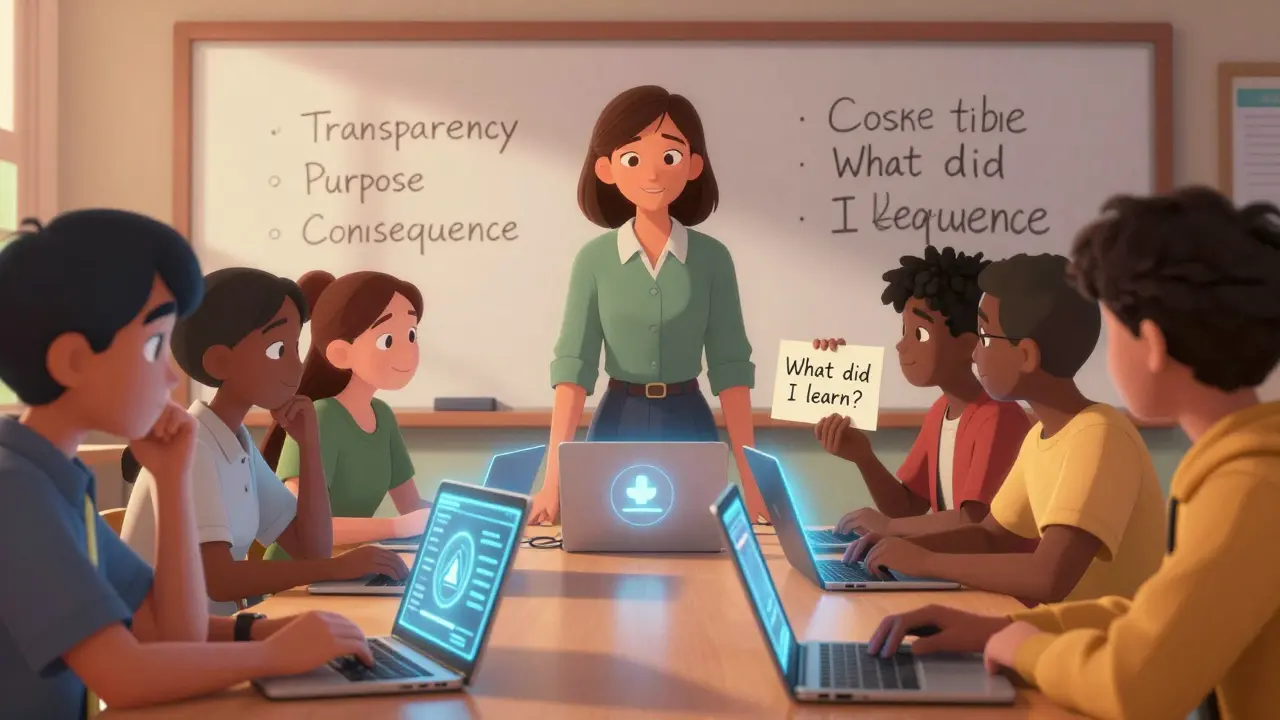

Effective policy starts with three clear principles:

- Transparency: Students must declare when and how they used AI.

- Purpose: AI can help brainstorm, edit, or clarify, but not replace original thinking.

- Consequence: Violations aren’t punished with failing grades. They’re addressed with learning moments.

At Arizona State University, a pilot program in 2025 required students to submit an "AI Use Log" with every assignment. They had to answer: "What did you ask AI to do? What did you change? What did you learn?" Professors didn’t penalize AI use; they assessed growth. By the end of the semester, 74% of students reported improved critical thinking skills.

Design Matters More Than You Think

Policies alone won’t stop misuse. If the tool itself encourages abuse, no rule will fix it.

Think about how AI writing tools are designed today. Most offer one-click "rewrite this essay" buttons. They generate full responses in seconds. They don’t ask, "Are you sure you want to submit this as your own?" They don’t show a progress bar of how much original thought you’ve added. They’re built for speed, not learning.

There’s a better way. Tools designed for education should:

- Require interaction before output, such as asking students to summarize their idea first.

- Limit full-text generation: offer suggestions, not finished paragraphs.

- Include learning prompts, such as "What part of this do you still need to understand?"

- Track revision history to show how the student’s thinking evolved.

Tools like EduWrite (used in 12 U.S. universities in 2025) do exactly this. Students can’t generate a full essay. They can only ask for feedback on one paragraph at a time. The tool asks them to explain their reasoning. If they skip that step, the AI won’t respond. The result? Fewer submissions that feel robotic. More submissions that feel like learning.
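To make these design principles concrete, here is a minimal Python sketch of a feedback gate in the spirit of the EduWrite description: it accepts only one paragraph at a time, refuses to respond until the student explains their reasoning, and records each accepted revision. Everything in it is hypothetical (the `FeedbackSession` class, the 15-word explanation threshold, the placeholder feedback text); it is not EduWrite’s actual implementation or API, and a real tool would call a language model where the placeholder response is.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackSession:
    """Hypothetical feedback gate: one paragraph at a time, explanation required first."""

    # Assumed minimum length for the student's explanation (not from any real tool).
    min_explanation_words: int = 15
    # Revision history: each accepted (paragraph, explanation) pair, in order.
    history: list[tuple[str, str]] = field(default_factory=list)

    def request_feedback(self, paragraph: str, explanation: str) -> str:
        # Limit full-text generation: reject anything longer than one paragraph.
        if "\n\n" in paragraph.strip():
            return "Please submit one paragraph at a time."
        # Require interaction before output: no explanation, no feedback.
        if len(explanation.split()) < self.min_explanation_words:
            return "Before I respond, explain in your own words what this paragraph argues and why."
        # Track revision history so the student's thinking stays visible.
        self.history.append((paragraph, explanation))
        # Placeholder feedback ending in a learning prompt; a real tool would
        # generate suggestions here rather than rewritten text.
        return ("Suggestion: check whether your evidence supports your claim. "
                "What part of this do you still need to understand?")


session = FeedbackSession()
print(session.request_feedback("Gatsby throws parties.", "It is about parties."))
```

The order of the checks is the point of the design: scope and explanation are enforced before any output is produced, so the tool never generates text the student could submit without first doing some thinking of their own.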

Teachers Need Support Too

Most educators didn’t train to teach with AI. They’re expected to police it without resources.

At Tempe High School, teachers were given a 10-minute webinar on "AI Ethics" and told to "figure it out." That’s not enough. Teachers need:

- Clear rubrics that distinguish between AI-assisted and AI-generated work.

- Sample student logs and responses to review.

- Time to co-design AI policies with students, not just enforce them.

A 2025 survey of 400 U.S. instructors found that 82% felt unprepared to handle AI misuse. But those who received three hours of training plus a policy toolkit reported a 60% drop in academic integrity incidents.

Student Involvement Is Key

The best policies aren’t handed down. They’re co-created.

At the University of Michigan, a student-led task force worked with faculty to design their AI policy. Students drafted the "AI Use Declaration," created a short video explaining it, and even built a simple web tool that helped peers self-assess their use.

One student said: "We didn’t want to be treated like criminals. We wanted to learn how to use this right."

When students help design the rules, they’re more likely to follow them. They’re also more likely to call out misuse among peers, not as snitching but out of shared responsibility.

What Happens When You Get It Right

It’s not about stopping AI. It’s about guiding it.

At a small liberal arts college in Oregon, faculty replaced AI bans with a "Thinking with AI" module in every first-year course. Students learned to use AI to challenge their ideas, not replace them. They used it to generate counterarguments, test assumptions, and refine their voice.

By the end of the year, 89% of students could clearly explain how they used AI in their work. Their essays weren’t perfect, but they were honest. And that’s where real learning begins.

AI tools aren’t going away. The question isn’t whether to allow them. It’s whether we’re ready to teach students how to use them ethically.

Can AI tools be completely banned in classrooms?

No, and trying to ban them doesn’t work. Students will find ways to use them anyway. Bans create distrust and hide learning gaps. Instead, focus on teaching responsible use. Clear policies, transparent expectations, and tools designed for learning are far more effective than prohibition.

Should students always disclose AI use?

Yes. Disclosure isn’t about punishment; it’s about accountability. When students explain how they used AI, they reflect on their own thinking. This builds metacognition. Tools like EduWrite and the AI Use Log model this practice. It turns AI from a shortcut into a thinking scaffold.

What’s the difference between AI-assisted and AI-generated work?

AI-assisted work means the student used AI to improve their own ideas-like refining grammar, brainstorming structure, or testing logic. AI-generated work means the AI produced the core content, and the student submitted it as their own. The key difference is ownership of thought. The student should be able to explain every part of their submission.

How can teachers detect AI misuse without surveillance tools?

You don’t need AI detectors, which are unreliable. Instead, look for mismatched writing styles, lack of personal insight, or sudden shifts in tone. Ask students to walk through their process. Require drafts. Use AI Use Logs. The goal isn’t to catch cheaters. It’s to teach students to think with AI rather than let it replace their thinking.

Are there AI tools designed specifically for ethical student use?

Yes. Tools like EduWrite, WriteWise, and ThinkPal are built with education in mind. They don’t generate full essays. They ask students to explain their ideas first. They limit output to small chunks. They track revision history. They include prompts that encourage reflection. These tools are used in over 20 U.S. institutions and have reduced misuse by 70% or more.

Next Steps for Schools and Educators

If you’re an educator or administrator, here’s where to start:

- Form a small team: include a student, a teacher, and a tech specialist.

- Review your current AI policy, or create one from scratch, using transparency, purpose, and consequence as pillars.

- Choose one AI tool that supports ethical use (like EduWrite) and pilot it in one course.

- Train teachers with a 3-hour workshop, not a 10-minute email.

- Ask students: "What would make AI use feel fair and useful?" Then listen.

AI won’t replace students. But poorly designed systems will. The future of learning isn’t about blocking technology; it’s about building it right.

Victoria Kingsbury

February 28, 2026 AT 08:55

Finally, someone gets it. It’s not about banning AI; it’s about teaching kids how to use it like a compass, not a crutch. I’ve seen students turn in essays that read like they were written by a chatbot on autopilot. But when you give them structure, like the AI Use Log, it flips. They start actually thinking. That’s the win.

And yeah, teachers need training too. You can’t just throw a tool at someone and say ‘figure it out.’ We wouldn’t hand a kid a wrench and expect them to build a car. Same logic.

Tonya Trottman

March 1, 2026 AT 03:25

Oh please. You think students ‘don’t know better’? Nah. They know EXACTLY what they’re doing. They just don’t care. ‘I didn’t get boundaries’? Sounds like a cry for attention wrapped in a pedagogical blanket. The real issue? Lazy parents, zero accountability, and schools too afraid to fail anyone.

And ‘EduWrite’? Cute. Next you’ll tell me we need a ‘moral compliance meter’ on every keyboard.

Rocky Wyatt

March 2, 2026 AT 10:27

I’ve been in classrooms where the AI was the only thing keeping students from dropping out. I’m not saying it’s perfect, but let’s not pretend these kids are villains. They’re tired. Overworked. Under-supported. The system’s broken, and AI is just the symptom.

One kid told me, ‘I used AI to write my essay because I had three jobs and my mom was in the hospital.’ What’s your solution? Expel him? No. Help him. Teach him. That’s all we owe them.

Santhosh Santhosh

March 3, 2026 AT 23:34

It’s interesting how we frame this as a problem of misuse, when in reality it’s a failure of pedagogy. We have always outsourced cognitive labor, from abacuses to calculators to spellcheck. The question isn’t whether to allow it, but how to integrate it into the learning process meaningfully.

When we demand originality without teaching how to think, we create monsters. AI doesn’t make students lazy. Our curriculum does. The AI Use Log isn’t a tool; it’s a bridge. And bridges need both sides to be built, not just one.

Veera Mavalwala

March 4, 2026 AT 04:55

Oh honey, you’re so sweet. You think a log is gonna fix this? Let me tell you what really happens: students copy-paste, lie on the log, and laugh all the way to the A-. The system’s rigged. Teachers are drowning in 80 papers a week. They don’t have time to read logs. They just check for ‘AI-speak’ and call it a day.

And don’t get me started on ‘EduWrite.’ Sounds like a Silicon Valley fantasy. Real students? They’re using ChatGPT on their phones in the bathroom between classes. No tool is gonna stop that.

Ray Htoo

March 4, 2026 AT 06:44

I love how this post doesn’t just blame students. It looks at the whole ecosystem: the tools, the training, the policy gaps. That’s rare. I’ve been teaching for 12 years, and I’ve seen this shift from ‘AI is cheating’ to ‘AI is part of the process’ in my own class.

One thing that changed everything? I started asking students to submit voice memos explaining their drafts. Not the final essay. The thinking behind it. That’s when I saw real growth. Not because they were scared of getting caught, but because they started caring about their own ideas.

Natasha Madison

March 5, 2026 AT 08:14

This is all part of the woke agenda. You’re normalizing cheating under the guise of ‘learning.’ Next thing you know, they’ll be letting AI write their SATs. And who’s behind this? Big Tech. They want kids addicted to their tools. They don’t care if you learn; they care if you click. This isn’t education. It’s surveillance capitalism dressed up as pedagogy.

Sheila Alston

March 6, 2026 AT 23:46

It’s heartbreaking. These kids are being sold a lie. They’re told ‘use technology’ but never taught ethics. And now we’re surprised when they hand in AI essays like it’s normal? We’ve failed them. We’ve failed parents. We’ve failed the entire system.

I’ve seen students cry because they thought they were ‘cheating’ by using AI to help with grammar. They didn’t know the line. And we didn’t draw it. That’s on us. Not them.

sampa Karjee

March 7, 2026 AT 21:20

Let’s be brutally honest: this isn’t about education. It’s about credential inflation. Students are no longer learning to think; they’re learning to produce deliverables. AI is merely the logical endpoint of a system that values output over insight.

And your ‘EduWrite’? A Band-Aid on a hemorrhage. Real change requires dismantling the industrial model of higher ed. Not tinkering with prompts. Not logging usage. Not ‘learning moments.’ That’s just capitalism with a softer name.

Patrick Sieber

March 8, 2026 AT 03:29

As someone who teaches in Ireland, I’ve seen this play out too. The difference? Here, we don’t ban. We co-create. Students helped draft our AI policy. They designed the disclosure form. They even made a 3-minute TikTok explaining it.

Guess what? Misuse dropped 70%. Not because they were scared. Because they owned it. That’s the magic. Not tech. Not rules. Agency.

Kieran Danagher

March 9, 2026 AT 12:41

‘AI Use Log’? Sounds like a bureaucratic nightmare. You want students to reflect? Then design assignments that can’t be AI’d. Ask them to interview their grandma. Analyze their own journal entries. Write a letter to their future self. No algorithm can fake that.

Stop trying to police AI. Start designing work that makes AI irrelevant.

OONAGH Ffrench

March 9, 2026 AT 16:47

The core issue is epistemological. We have conflated production with understanding. AI doesn’t create dishonesty; it exposes a deeper rot in how we define learning. If a student can’t explain their work, they never learned it. The tool is not the problem. The assessment is.

Perhaps we need to move from written essays to oral defenses. From static submissions to dynamic dialogue. That’s where true learning lives, not in a 1,500-word document generated in 12 seconds.

poonam upadhyay

March 9, 2026 AT 23:06

Oh my god, I can’t believe this is even a debate. Students are using AI because they’re terrified of failing. And why? Because grades are everything. Because parents scream if you get a B. Because colleges only care about numbers. This isn’t about ethics; it’s about trauma. And you’re all sitting here talking about logs and prompts like it’s a UX design problem?

Fix the system. Not the tool.

Shivam Mogha

March 10, 2026 AT 04:18

Stop overcomplicating. Teach them to think. Don’t ban. Don’t log. Just assign work that requires personal experience. No AI can write about your first job. Or your breakup. Or your grandma’s cooking. Make them write about what they know. Then they won’t need AI.

mani kandan

March 11, 2026 AT 15:20

I’ve been teaching in rural India for 18 years. We don’t have AI tools. But we do have students who copy essays from the internet. The problem is the same: no one taught them how to think for themselves.

Our solution? Oral exams. Weekly reflections. Peer critiques. No tech needed. The key isn’t the tool; it’s the culture. Build one that values curiosity over conformity. The rest follows.

Rahul Borole

March 13, 2026 AT 01:45

As an educator with a Ph.D. in Learning Sciences, I must emphasize: the proposed interventions are not merely pedagogical; they are epistemological reforms. The integration of AI necessitates a paradigm shift from transmission-based instruction to co-constructive, metacognitive engagement. The AI Use Log, when coupled with structured reflective scaffolding, functions not as a compliance mechanism, but as a cognitive artifact that externalizes the learner’s intellectual trajectory. Without this, any technological intervention remains superficial.